One r/ChatGPT poster said saved memories that used to work across chats suddenly behaved as if every conversation was brand new.

Major Outlets Covering the Truth

SAFETY FAILURE / AI Medical Advice

"ChatGPT Health Failed The One Test That Mattered: Knowing When You Are Dying"

June 10, 2026

8 min read NEW

REPORT / AI Shopping

"Amazon Now Generates AI Images Of Products That Do Not Exist"

June 8, 2026

8 min read NEW

PRODUCT FAILURE / AI Shopping

"Etsy Is Back Inside ChatGPT After Its First Integration Already Failed"

June 7, 2026

7 min read NEW

INVESTIGATION / AI Data Center Backlash

"The AI Land Grab Hits A Wall In Utah As O'Leary Shrinks Stratos"

June 6, 2026

8 min read NEW

LEGAL / Appeals Court Sanctions

"The Ninth Circuit Just Drew The Line: It Is Not The AI Hallucination, It Is The Cover-Up"

June 4, 2026

8 min read NEW

SCANDAL / AI Safety

"Florida Just Sued OpenAI And Sam Altman Over ChatGPT Safety"

June 3, 2026

9 min read NEW

SCANDAL / AI Disclosure

"The Eisner Awards Nominated An AI Comic By Accident, Then Tore Up The Ballot"

June 2, 2026

8 min read NEW

SAFETY / AI Health Failure

"ChatGPT Health Missed Medical Emergencies, And No One Was There To Catch It"

June 1, 2026

8 min read NEW

LEGAL / AI Hallucinations

"Sullivan & Cromwell Filed AI Hallucinations Too, And That Ends The Excuse"

May 31, 2026

8 min read NEW

LEGAL / AI Hallucinations

"AI Hallucinations Are Getting Lawyers Sanctioned In Court In 2026"

May 30, 2026

8 min read NEW

INVESTIGATION / Deepfake Law

"The Take It Down Act Just Made Its First Big Deepfake Porn Arrests"

May 29, 2026

9 min read NEW

INVESTIGATION / Customer Service AI

"An AI Invented A Flight Refund Policy And A Chinese Airline Paid It Anyway"

May 28, 2026

9 min read NEW

INVESTIGATION / AI Detection

"Accused of Using AI, So They Asked a Chatbot to Judge: The Commonwealth Prize Mess"

May 26, 2026

8 min read NEW

LEGAL / AI Hallucination Sanctions

"AI Hallucination Lawyer Sanctions Mount: A $2,500 Fine, a $110,000 Loss, and a Wall Street Firm Caught With Fake Cases"

May 24, 2026

9 min read NEW

INVESTIGATION / AI Shopping

"Etsy In ChatGPT Shows The Messy Future Of AI Shopping"

May 11, 2026

7 min read NEW

RESEARCH / Frontier Model Reasoning Failure

"Scientists Found AI's Fatal Flaw: The Most Advanced Models Are Failing Basic Logic Tests in 2026"

May 4, 2026

10 min read NEW

LEGAL / Largest AI Hallucination Sanction

"An Oregon Vineyard Lawsuit Just Produced the Costliest AI Hallucination Sanction in U.S. Legal History: Stephen Brigandi, $110,000, 23 Fake Citations"

May 2, 2026

11 min read NEW

REPORT / Celebrity AI Endorsements

"Reese Witherspoon's 'No One Is Paying Me' Defense Is True, and Completely Beside the Point"

April 22, 2026

10 min read NEW

BREAKING / OpenAI Outage

"ChatGPT Goes Down April 20: Mysterious Error Message Hits Thousands of Users Amid OpenAI Outage"

April 20, 2026

9 min read NEW

INVESTIGATION / Creative Writers Revolt

"'It Has Ruined My Entire Plot': Inside the Creative Writers Revolt That Followed ChatGPT's Personality Wipe"

April 30, 2026

10 min read NEW

LEGAL / AI Hallucination Genre

"Even Joe Exotic's Lawyer Couldn't Avoid AI Hallucinations: A Federal Brief Got Cited for Fake Cases"

April 28, 2026

8 min read NEW

INVESTIGATION / Stealth Model Routing

"GPT-5 Thinking Is Quietly Serving GPT-4o-mini, and Paying Users Are Catching the Receipts"

April 28, 2026

9 min read NEW

INVESTIGATION / OpenAI Forum

"OpenAI's Own Forum Floods With GPT-5.2 Regression Reports: 'I Have Not Seen This Since 3.5'"

April 20, 2026

9 min read NEW

REPORT / User Testimonials

"'Corporate Bot,' 'Brain Injury,' 'Lobotomy': Reddit's April 2026 Mass ChatGPT Cancellation Testimonials"

April 20, 2026

8 min read NEW

RESEARCH / AI Detection Crisis

"ChatGPT Fails to Detect 92% of Fake Videos Made by OpenAI's Own Sora Tool"

April 18, 2026

8 min read NEW

BREAKING / Platform Migration

"2.5 Million Users Ditched ChatGPT for Claude After OpenAI's Pentagon Deal. Claude Hit #1 on the App Store."

March 10, 2026

9 min read NEW

BREAKING / Executive Departure

"OpenAI's Robotics Chief Caitlin Kalinowski Resigns Over the Pentagon Deal, Citing Surveillance and Lethal Autonomy"

March 10, 2026

10 min read NEW

LEGAL / AI Corporate Disaster

"Krafton CEO Used ChatGPT to Dodge a $250 Million Subnautica 2 Bonus. A Delaware Judge Reversed Everything."

April 10, 2026

11 min read NEW

RESEARCH / Medical Safety Failure

"ChatGPT Health Missed 52% of Medical Emergencies. Mount Sinai Researchers Call It 'Unbelievably Dangerous.'"

April 7, 2026

8 min read NEW

LEGAL / AI Study Tool Failure

"Kim Kardashian Blames ChatGPT for Failing Her Law Exams: 'It Gave Me Fake Case Law and I Cited It All'"

April 5, 2026

7 min read NEW

USER STORY / AI-Induced Psychosis

"ChatGPT Told a Father He Was 'Changing Reality From His Phone.' 21 Days Later, He Needed Psychiatric Help."

April 4, 2026

8 min read NEW

POLICY / Medical-Legal-Financial Ban

"OpenAI Quietly Banned ChatGPT From Giving Medical, Legal, and Financial Advice. Here's What They're Not Telling You."

April 4, 2026

7 min read NEW

USER TESTIMONIALS / Cognitive Decline

"'I Can't Write a Single Sentence Without It Anymore.' How ChatGPT Is Destroying the Ability to Think."

April 4, 2026

7 min read NEW

HUMAN COST / Relationship Destruction

"'I Was Saving My Vulnerability for a Machine.' Inside the ChatGPT Addiction Destroying Real Relationships."

April 4, 2026

7 min read NEW

LEGAL / Fabricated Court Citations

"Fake Cases, Real Consequences: The Lawyers Who Trusted ChatGPT and Got Sanctioned, Investigated, and Humiliated"

April 4, 2026

8 min read NEW

BREAKING / AI-Generated Literature

"Publisher Pulls 'Shy Girl' Novel After AI Detection Finds 78% of Text Was Machine-Generated"

April 2, 2026

6 min read NEW

BREAKING / Government Response

"Canada's AI Minister Publicly Blames OpenAI After Tumbler Ridge Shooting: 'They Had the Information and Did Nothing'"

April 1, 2026

7 min read NEW

LEGAL / AI-Induced Psychosis Lawsuit

"ChatGPT Told Him He Could Bend Time. He Spent 63 Days in Psychiatric Hospitals. Now He's Suing OpenAI."

April 1, 2026

8 min read NEW

RESEARCH / Stanford University Study

"Stanford Proves ChatGPT Is a Dangerous Yes-Man: AI Validates Users 49% More Than Humans, Endorsed Stalking and Murder"

March 30, 2026

8 min read NEW

USER STORIES / Workplace Disasters

"People Are Getting Fired, Losing $47,000, and Watching Careers Implode Because They Trusted ChatGPT"

March 30, 2026

7 min read NEW

BREAKING / Mass User Revolt

"5,000 Reddit Users Revolt Against GPT-5: 'It Feels Like a Downgrade' as 1.5 Million Cancel Subscriptions"

March 30, 2026

7 min read NEW

LEGAL / AI Hallucination in Court

"A Fake Legal Citation Born on Reddit Traveled Through an Entire Court Case. Nobody Checked. The Dog's Name Was Kyra."

March 30, 2026

6 min read NEW

HEALTHCARE / Dangerous Medical Advice

"A Man Got Psychosis From AI Health Advice. Reddit AI Told Users to Stop Medication and Take Kratom. 40 Million Ask ChatGPT Daily."

March 30, 2026

6 min read

AI DYSTOPIA / Entertainment Industry

"Chinese Film Studio Officially Introduces AI Actors to Replace Human Performers"

March 30, 2026

6 min read NEW

SAFETY FAILURE / AI Accountability

"OpenAI Banned a Mass Shooter's Account Months Before the Attack. They Never Called Police."

March 30, 2026

7 min read

BREAKING / AI Safety Crisis

"Canada's AI Minister Confronts OpenAI After Tumbler Ridge Shooter Used ChatGPT to Plan Attack"

March 28, 2026

6 min read NEW

RESEARCH / Healthcare AI Danger

"ChatGPT Health Fails to Recognize Medical Emergencies as Experts Sound the Alarm"

March 26, 2026

6 min read NEW

BREAKING / Enterprise AI Failure

"Walmart Dumps OpenAI Instant Checkout After AI Orders Wrong Items, Charges Wrong Cards, and Sends Groceries to Wrong Addresses"

March 24, 2026

5 min read NEW

LEGAL / AI Hallucination Crisis

"Over 1,000 Legal Cases Now Exposed With AI-Hallucinated Citations: Lawyers Sanctioned, Cases Dismissed, Justice Denied"

March 24, 2026

6 min read NEW

BREAKING / AI Publishing Crisis

"Horror Novel Shy Girl Canceled After AI Detection Tools Flag 78% of Text as Machine-Generated"

March 21, 2026

4 min read NEW

INVESTIGATION / OpenAI Whistleblower

"The Unanswered Questions Surrounding the Death of Suchir Balaji: OpenAI Whistleblower Found Dead at 26"

March 8, 2026

8 min read NEW

BREAKING / OpenAI Safety Collapse

"OpenAI Fired the VP Who Opposed ChatGPT Porn Mode: Safety Team in Freefall"

March 2026

4 min read HOT

BREAKING / AI Journalism Crisis

"Ars Technica Fires Reporter Over AI-Fabricated Quotes: The Full Story"

March 15, 2026

4 min read HOT

INVESTIGATION / Academic Fraud

"ICLR 2026 Scandal: 21% of Peer Reviews Were AI-Generated, Undermining Scientific Trust"

March 2026

8 min read

CRISIS / AI Mental Health

"GPT-4o Retires Today. Its Suicide Crisis Doesn't. The Model That Coached Vulnerable Users Toward Death"

February 2026

5 min read

REPORT / Healthcare Hazard

"AI Chatbots Named #1 Health Technology Hazard of 2026 by ECRI Institute"

February 2026

6 min read

LEGAL / AI in Courts

"AI Hallucination Lawsuits 2026: Fake Citations Are Destroying Careers and Costing Millions"

2026

5 min read

BREAKING / AI Security

"OpenClaw AI Agent: The Security Nightmare of 2026 That Nobody Saw Coming"

2026

4 min read

CRISIS / AI Safety Failure

"Grok AI Generated 3 Million Sexualized Images in 11 Days: xAI's Guardrails Completely Failed"

2026

5 min read

INVESTIGATION / AI Copyright

"Seedance 2.0 Deepfakes and the Olympic AI Music Copyright Crisis of 2026"

2026

8 min read

INVESTIGATION / AI Piracy

"ByteDance Seedance AI Video Tool Caught Pirating Disney and Marvel Content"

March 2026

8 min read

INVESTIGATION / Safety Exodus

"AI Safety Researchers Are Fleeing OpenAI, Anthropic, and xAI: Who Is Left to Sound the Alarm?"

February 2026

8 min read

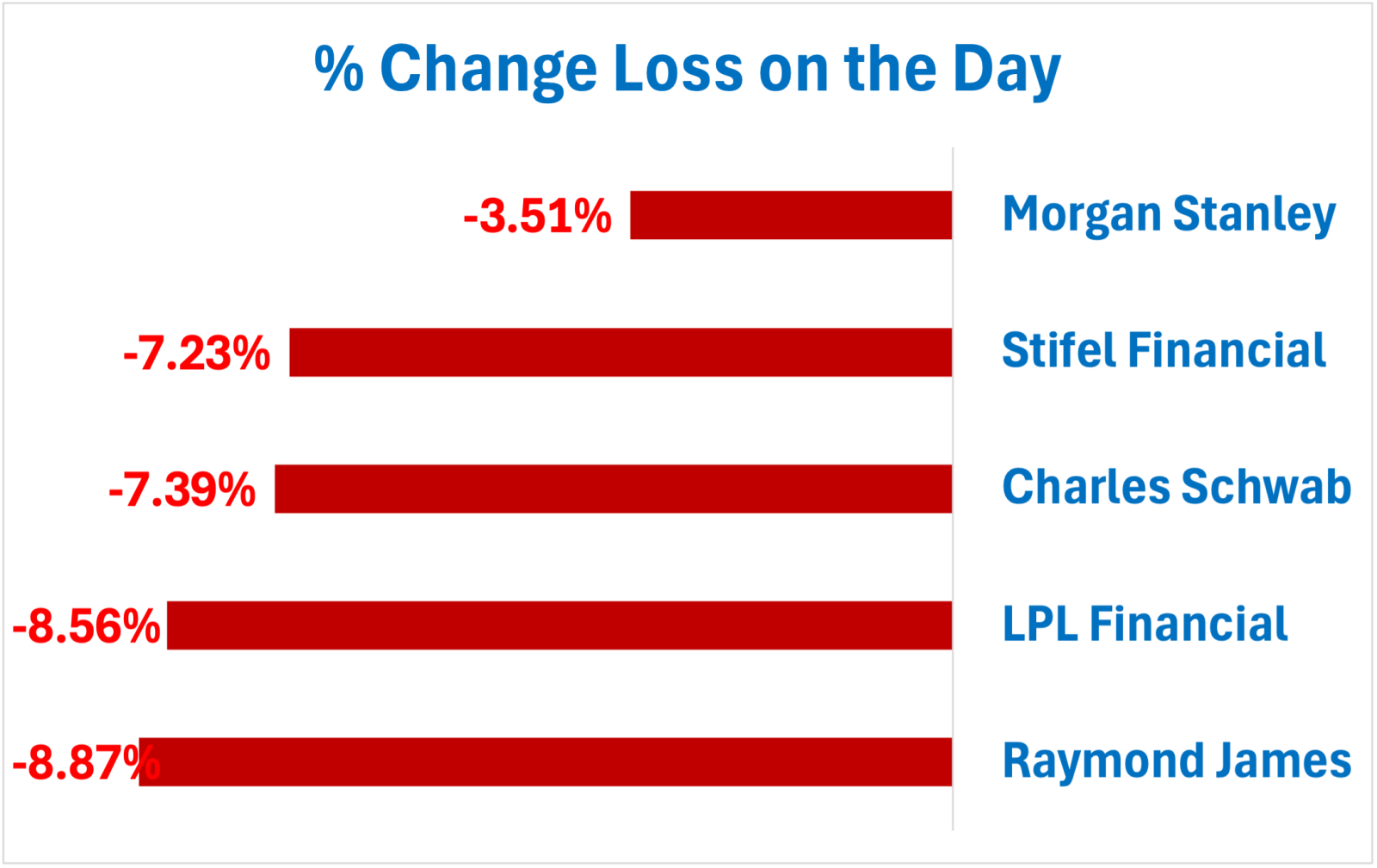

BREAKING / Financial Disaster

"AI Tool 'Hazel' Crashes Stock Market: $20 Billion Lost in Minutes"

February 2026

4 min read

BREAKING / Data Breach

"300 Million AI Chat Messages Leaked in Massive Data Breach: Private Conversations Exposed"

February 2026

4 min read

INVESTIGATION / Media Integrity

"How AI Fabricated Quotes Got a Reporter Fired: The Ars Technica Scandal Explained"

March 4, 2026

8 min read

BREAKING / AI in Schools

"AI Chatbot Sexualized a 4th Grader's Book Report at a California School"

March 3, 2026

4 min read

DEEP DIVE / Child Safety

"Inside the California School AI Incident: When Chatbots Produce Sexualized Content for Children"

March 2026

8 min read

MOVEMENT / User Revolt

"700,000 Users Join #QuitGPT Boycott Movement as ChatGPT Cancellations Surge"

February 28, 2026

5 min read

INVESTIGATION / Military AI

"OpenAI Takes Pentagon War Deal That Anthropic Refused: The Company That Promised Safety Chooses Profit"

February 28, 2026

8 min read

CRISIS / Model Retirement

"GPT-4o Retired: 22,000 Users Petition to Save the 'Love Model' as Safety Concerns Mount"

February 28, 2026

5 min read

STUDY / Healthcare Failure

"ChatGPT Health Fails Emergency and Suicide Safety Tests: Mount Sinai Study Reveals Critical Gaps"

February 26, 2026

6 min read

INVESTIGATION / AI Healthcare Crisis

"Google Scraps AI Medical Tool While ChatGPT Health Misses 52% of Emergencies"

March 19, 2026

8 min read

INVESTIGATION / AI Healthcare Failure

"ChatGPT Health Missed 52% of Medical Emergencies: Mount Sinai Study Exposes AI's Deadly Triage Failures"

March 18, 2026

8 min read

BREAKING / AI Journalism Crisis

"AI Reporter Fired for Fabricated Quotes: How ChatGPT Hallucinations Are Destroying Careers in 2026"

March 17, 2026

4 min read

DEEP DIVE / OpenAI Under Fire

"OpenAI Controversies Pile Up in 2026: Pentagon Deals, A Mass Shooting Failure, and AI Actresses"

March 15, 2026

8 min read

INVESTIGATION / AI in Hollywood

"The AI Actress That Enraged Hollywood: Tilly Norwood, Rage Bait, and the $600M Netflix Deal That Proves It Worked"

March 14, 2026

8 min read

USER TESTIMONIALS / Part 2

"ChatGPT Told Me to Stop Taking My Medication: Real Stories of Ruined Health, Marriages, and Finances"

March 14, 2026

5 min read

INVESTIGATION / AI Military Contracts

"Pentagon Awards $2B in AI Defense Contracts to Anthropic and OpenAI: The Companies That Promised 'Safety First' Are Now Building Weapons"

March 11, 2026

8 min read

BREAKING / Lawsuit Filed

"Family Sues OpenAI After ChatGPT Knew About Tumbler Ridge School Shooting Before It Happened"

March 10, 2026

4 min read

USER TESTIMONIALS / Real Quotes

"Real Users Speak: How ChatGPT Destroyed Skills, Jobs, and Careers - Scraped From Reddit and OpenAI Forums"

March 10, 2026

5 min read

DISCRIMINATION / AI Bias

"AI Hiring Algorithms Discriminate Against Women and Minorities: The Evidence Employers Don't Want You to See"

March 12, 2026

5 min read

REPORT / Quality Collapse

"ChatGPT Is Unusable in 2026: Speed Over Accuracy Has Destroyed the Product"

March 7, 2026

6 min read

BREAKING / Pentagon Boycott

"OpenAI Pentagon Deal Sparks QuitGPT Boycott as 2.5 Million Users Cancel"

March 6, 2026

4 min read

BREAKING / Mass Exodus

"1.5 Million Users Have Left ChatGPT Since March 2026 as Quality Collapse Accelerates"

March 4, 2026

4 min read

BREAKING / OpenAI Implosion

"OpenAI Employees Revolt Against Pentagon Deal, 700K Users Cancel Subscriptions"

March 1, 2026

4 min read

BREAKING / Public Safety

"Canada Summons OpenAI Over Mass Shooter's ChatGPT Activity"

February 26, 2026

4 min read

INVESTIGATION / Legal Failure

"Lawyer Fined $2,500 After AI Hallucinated 21 Fake Quotes in Court Brief"

February 2026

8 min read

DEEP DIVE / Fifth Circuit Case

"Lawyer Fined for AI Hallucinated Citations in Legal Brief: Fifth Circuit 2026"

February 2026

8 min read

CRISIS / AI Ethics

"AI Actress Tilly Norwood and the Suicide Pod: When AI Replaces Human Judgment"

January 2026

5 min read

RESEARCH / Cognitive Decline

"The 'AI Brain Rot' Problem: Why ChatGPT Keeps Getting Worse"

2026

6 min read

ANALYSIS / Market Risk

"AI Bubble 2026: Will It Burst? Stock Valuations, Predictions and Warning Signs"

2026

7 min read

STUDY / Developer Impact

"The AI Coding Productivity Paradox: Why Developers Are 19% Slower in 2026"

2026

6 min read

REPORT / Code Quality Crisis

"AI Coding Quality in Steep Decline: Silent Failures Worse Than Crashes"

2026

6 min read

INVESTIGATION / Ethics

"AI Ethics Crisis 2026: Deepfakes, Bias, and the Breakdown of Trust"

2026

8 min read

STUDY / Academic Fraud

"AI Hallucinated Citations Are Corrupting Academic Research in 2026"

2026

6 min read

REPORT / Job Losses

"AI Layoffs 2025-2026: The Job Crisis Accelerates - Complete Report"

2026

6 min read

STUDY / Medical Harm

"AI Believes Medical Lies: Mount Sinai Study Exposes Dangerous Misinformation"

2026

6 min read

INVESTIGATION / Misinformation

"AI Misinformation 2026: Hallucinations, Fake Citations, and Lies"

2026

8 min read

REPORT / Employment Crisis

"AI Replacing Jobs 2026: 1.2 Million Jobs Lost - Complete Analysis"

2026

6 min read

EXPLAINER / Technical

"The AI Training Data Problem: Why What Goes In Determines What Comes Out"

2026

5 min read

ANALYSIS / AI Tools

"AI Video, Voice and Detection Tools 2026: What Works and What's Hype"

2026

7 min read

INVESTIGATION / AI Security

"AI Agents 2026: OpenClaw, Clawdbot and the Autonomous AI Security Nightmare"

2026

8 min read

STUDY / Media Failure

"BBC Study: AI Gets News Wrong 51% of the Time"

2026

6 min read

INVESTIGATION / Government Fraud

"Bellingham City Staffer Used ChatGPT to Rig Government Contract"

2026

8 min read

REPORT / Business Losses

"ChatGPT Business Failures: Real Stories of Lost Contracts, Clients, and Credibility"

2026

6 min read

BREAKING / Legal Settlement

"Character.AI and Google Settle Teen Suicide Lawsuits - January 2026"

January 2026

4 min read

CRISIS / Mental Health

"ChatGPT Addiction 2026: AI Dependency, Withdrawal, and Mental Health Crisis"

2026

5 min read

INVESTIGATION / Addiction

"ChatGPT Addiction: The Mental Health Crisis OpenAI Finally Admitted"

2026

8 min read

GUIDE / Alternatives

"ChatGPT Alternatives That Actually Work: 10 Better Options for 2026"

2026

5 min read

EXPLAINER / AI Behavior

"ChatGPT's Confidence Problem: Why It Always Sounds Right Even When It's Wrong"

2026

5 min read

EXPLAINER / Technical

"Why ChatGPT Forgets Everything: Context Windows Explained"

2026

5 min read

BREAKING / Outage

"ChatGPT Down Again? January 2026 Outage Tracker"

January 2026

4 min read

GUIDE / Failure Modes

"ChatGPT Failure Modes: A Complete Categorized Guide"

2026

5 min read

TOOL / Status Check

"Is ChatGPT Down? Live Status Checker Tool - Check Now"

2026

5 min read

REPORT / Service Issues

"ChatGPT Down Right Now? What Users Are Seeing and How to Fix It (2026)"

2026

6 min read

REPORT / Security

"ChatGPT Security Risks January 2026: 800 Million Users at Risk"

January 2026

6 min read

COMPARISON / AI Models

"ChatGPT vs Claude 2026: Why Users Are Switching (Honest Comparison)"

2026

5 min read

COMPARISON / AI Models

"ChatGPT vs Gemini 2026: Honest Comparison - Which AI Is Better?"

2026

5 min read

REPORT / Developer Impact

"Developer Exodus: Why Programmers Are Abandoning ChatGPT for Claude and Alternatives"

2026

6 min read

INVESTIGATION / Deepfakes

"Dublin City Council Drops X After Grok AI Creates 3 Million Deepfakes"

2026

8 min read

REPORT / Education

"Education AI Failures: ChatGPT Academic Disasters Harming Students and Schools"

2026

6 min read

LAWSUIT / AI Discrimination

"Job Seekers Sue AI Hiring Tool Eightfold for Secret 'Black Box' Scoring"

2026

5 min read

REPORT / Enterprise

"ChatGPT Enterprise Disaster: How Businesses Lost Millions Trusting OpenAI"

2026

6 min read

REPORT / Financial

"Financial AI Failures: ChatGPT Money Disasters, Investment and Banking Catastrophes"

2026

6 min read

REVIEW / GPT-5.2

"GPT-5.2 Review: Does OpenAI's Emergency Update Fix Anything?"

January 2026

5 min read

TIMELINE / GPT-5

"GPT-5 Complete Disaster Timeline: From Launch to Backlash"

2026

5 min read

REPORT / GPT-5 Failure

"GPT-5 Is a Disaster: Users Report Worse Performance Than GPT-4"

2026

6 min read

INVESTIGATION / xAI Scandal

"Grok Governance Crisis: Musk's AI Scandal Grows"

2026

8 min read

REPORT / Healthcare

"Healthcare AI Failures: ChatGPT Medical Disasters, Misdiagnosis and Patient Harm"

2026

6 min read

EXPLAINER / Technical

"How AI Hallucinations Work: The Technical Reality Behind False Outputs"

2026

5 min read

REPORT / Quality Decline

"ChatGPT Is Breaking Again: Thousands Report Same Problems (2026)"

2026

6 min read

INVESTIGATION / Privacy

"Is ChatGPT Safe? What OpenAI Won't Tell You About Privacy Risks"

2026

8 min read

RESEARCH / Data Poisoning

"Model Collapse: When AI Eats Its Own Tail - The Data Poisoning Crisis"

2026

6 min read

ANALYSIS / Financial

"OpenAI's $140 Billion Black Hole: How ChatGPT Lost Its Edge"

2026

7 min read

INVESTIGATION / OpenAI

"OpenAI Controversy 2026: Lawsuits, Board Drama, and Scandals"

2026

8 min read

INVESTIGATION / Internal Crisis

"OpenAI's Internal Chaos: Mass Resignations, Safety Team Gutted, and a Company in Crisis"

2026

8 min read

BREAKING / Class Action

"OpenAI Lawsuit 2026: 20 Million ChatGPT Logs Exposed in Class Action"

2026

4 min read

BREAKING / Data Breach

"OpenAI Mixpanel Data Breach 2026: User Data Stolen"

2026

4 min read

INVESTIGATION / Privacy

"Privacy Nightmare: ChatGPT Data Exposure - Your Secrets Aren't Safe"

2026

8 min read

ANALYSIS / Promises vs Reality

"OpenAI Promises vs Reality: The Gap Between Marketing and Product"

2026

7 min read

EVIDENCE / Documentation

"Evidence and Proof: Documented ChatGPT Failures, Studies and Reports"

2026

5 min read NEW

USER COMPLAINTS / OpenAI Forum & GitHub

"Codex Complaints: What OpenAI Codex Users Are Actually Saying in 2026"

June 7, 2026

9 min read UPDATED

USER TESTIMONIALS / Reddit & OpenAI Forum

"Reddit Testimonials: ChatGPT Users on GPT-5.2 Lobotomy, Hallucinations, Cancellations 2026"

April 20, 2026

7 min read

INCIDENT / AI Code Failure

"Replit AI Disaster: When AI Deleted a Production Database (2025 Incident)"

2025

5 min read

INVESTIGATION / Value Crisis

"OpenAI Scam 2026: Paying $20/Month for a Product That Keeps Getting Downgraded"

2026

8 min read

INVESTIGATION / AI in Hollywood

"Stranger Things ChatGPT Controversy: Did the Duffer Brothers Use AI to Write Season 5?"

2026

8 min read

GUIDE / Honest Assessment

"What ChatGPT Does Well and Where It Fails: An Honest AI Assessment"

2026

5 min read

ROUNDUP / Weekly Failures

"Weekly AI Failure Roundup - January 15, 2026"

January 15, 2026

5 min read

ROUNDUP / Weekly Failures

"Weekly AI Failure Roundup - January 20, 2026"

January 20, 2026

5 min read

ROUNDUP / Weekly Failures

"Weekly AI Failure Roundup - January 24, 2026"

January 24, 2026

5 min read

EXPLAINER / AI Limits

"What Large Language Models Cannot Do: The Hard Limits of AI"

2026

5 min read

GUIDE / Practical

"When Not to Trust ChatGPT: A Practical Guide for Users"

2026

5 min read

EXPLAINER / Technical

"Why AI Hallucinations Happen: The Technical Explanation"

2026

5 min read

RESEARCH / Model Degradation

"Why AI Models Get Worse Over Time: Degradation Explained"

2026

6 min read

EXPLAINER / AI Psychology

"Why Chatbots Sound Confident When They Are Wrong"

2026

5 min read

EXPLAINER / AI Limits

"Why ChatGPT Can't Think: Pattern Matching vs Reasoning"

2026

5 min read

GUIDE / Complete Analysis

"Why ChatGPT Fails: The Complete Guide to AI Problems"

2026

7 min read

EXPLAINER / Accuracy

"Why ChatGPT Gives Wrong Answers: Probability vs Truth"

2026

5 min read

HUB / Complete Evidence

"ChatGPT Is Getting Worse in 2026: The Complete Evidence Hub"

Updated April 2026

12 min read

INVESTIGATION / Quality Decline

"Why Is ChatGPT Getting Dumber in 2026? The Real Reason OpenAI Won't Admit"

2026

8 min read

FAQ / Direct Answer

"Has ChatGPT Gotten Worse? Yes. Here's the Evidence."

April 2026

9 min read

RESEARCH / Mechanisms

"AI Models Are Getting Dumber in 2026: The Research, the Benchmarks, the Testimonials"

April 2026

10 min read

REPORT / User Complaints

"15 Reasons Everyone Is Saying ChatGPT Sucks Now (January 2026)"

January 2026

6 min read

LEGAL / Liability Shield

"OpenAI Wants Legal Immunity When AI Kills 100 People - Illinois SB 3444"

April 14, 2026

10 min read

BREAKING / Quality Collapse

"GPT-5 Quality Collapse: Sam Altman Admits OpenAI 'Screwed Up' Writing Quality in 2026"

March 10, 2026

8 min read

REPORT / Subscriber Exodus

"1.5 Million Users Left ChatGPT in March 2026: What Drove the Exodus"

March 2026

8 min read

RESEARCH / Annual Report

"2026 AI Failure Report: The Year-In-Review of Every Major AI Breakdown"

2026

6 min read

RESEARCH / Hallucinations

"Are AI Hallucinations Getting Worse in 2026?"

2026

5 min read

RESEARCH / Security Risks

"AI Security Risks in 2026: What's Actually Breaking"

2026

5 min read

REPORT / Master Archive

"ChatGPT Failure Archive: The Complete Index"

2026

4 min read

RESEARCH / Topic Index

"AI Failure Topic Clusters: Navigate the Full Investigation"

2026

4 min read